What does Word2Vec actually do?

Word2Vec is an NLP technique that learns numerical vector representations of words based on their surrounding words, so that similar words have similar vectors.

That is

If two words appear in similar sentences, Word2Vec makes their vectors similar.

Example:

- happy appears near excited

- pizza appears near burger

So Word2Vec learns:

- happy ≈ excited

- pizza ≈ burger

Word Embeddings as Feature Representation

Suppose we consider such values, for each feature

| Feature / Word | Boy | Girl | King | Queen | Apple | Mango |

| Gender | -1 | 1 | -0.95 | 0.93 | 0.01 | 0.05 |

| Royal | 0.01 | 0.02 | 0.95 | 0.96 | -0.02 | 0.02 |

| Age | 0.03 | 0.02 | 0.75 | 0.68 | 0.95 | 0.96 |

| Food | ~0 | ~0 | ~0 | ~0 | High | High |

| … | … | … | … | … | … | … |

| Dimension n | … | … | … | … | … | … |

Let us take our vocabulary:

Boy, Girl, King, Queen, Apple, Mango

In a hypothetical world (just for understanding), we can imagine that Word2Vec learns features like:

- Gender

- Royal

- Age

- Food

- … many more (actually ~300 dimensions in real models)

Each word gets a score for every feature.

What does our table show?

Each column is a word.

Each row is a hidden feature.

For example:

🔹 Gender feature

- Boy → −1 (male)

- Girl → +1 (female)

- King → −0.95 (male)

- Queen → +0.93 (female)

- Apple, Mango → near 0 (no gender)

So Word2Vec learns:

👉 Boy ≈ King (both male)

👉 Girl ≈ Queen (both female)

🔹 Royal feature

- King, Queen → very high values (~0.95)

- Boy, Girl → near zero

- Fruits → near zero

So the model understands:

👉 King and Queen are related by royalty.

🔹 Age feature

- King, Queen → higher (adult)

- Boy, Girl → lower (young)

So:

👉 King ≠ Boy (main difference is age).

🔹 Food feature

- Apple, Mango → high

- Humans → near zero

So:

👉 Apple ≈ Mango (both fruits).

What do we observe?

From these numbers, Word2Vec automatically learns:

- King, Queen are similar (royalty)

- Boy, Girl are similar (human + young)

- Apple, Mango are similar (food)

- King and Prince would be similar except for age (if Prince existed)

Meaning Arithmetic

Because meaning is stored as numbers, we can do math:

- King = Royal + Male + Adult

- Queen = Royal + Female + Adult

So:

King − Male + Female ≈ Queen

This is why Word2Vec can perform:

king − man + woman ≈ queen

It works because each vector contains semantic features.

So, Word2Vec does not just count words.

It learns properties of words (gender, category, relationships) from data.

Similar words get similar vectors.

In reality:

- These are NOT just 3–4 features.

- Each word usually has 100–300 hidden dimensions.

We only show a few for understanding.

What is Cosine Similarity (in Word2Vec)?

In Word2Vec, every word is a vector (arrow) in space.

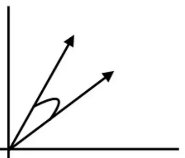

Suppose as shown in diagram, we have 2 vectors for 2 words,

Example:

- “king” → one vector

- “man” → another vector

Now the big question is:

👉 How do we know whether two words are similar?

We look at the ANGLE between the vectors.

That measurement is called:

✅ Cosine Similarity

Cosine similarity measures how close two word vectors are by checking the angle and distance between them.

Small angle → similar words

Large angle → different words

Small distance → similar words

Large distance → different words

Mathematically:

Cosine Similarity = cos(θ)

Distance = 1 − Cosine Similarity

Where θ = angle between the two vectors.

Case 1:

Imagine:

- One vector = king

- Second vector = man

They point almost in the same direction.

Let’s say the angle between them is:

👉 45°

So, cos(45°) = 1/√2 ≈ 0.707

Cosine Similarity = 0.707 (high)

Now calculate distance:

Distance = 1 − 0.707 = 0.29

What does this mean?

- Similarity ≈ 0.7 (quite high)

- Distance ≈ 0.29 (small)

So we say:

👉 “king” and “man” are similar words.

Case 2: Not similar words (90° apart)

Now imagine two vectors:

- One points right

- One points up

They form 90° (right angle).

cos(90°) = 0

So:

Cosine Similarity = 0

Now distance:

Distance = 1 − 0 = 1

What does this mean?

- Similarity = 0

- Distance = 1 (maximum)

So we say:

👉 These two words are NOT related at all.

Example:

- “king” and “mango”

- “happy” and “table”

| Angle | Cosine Similarity | Distance | Meaning |

| 0° | 1 | 0 | Same / very similar |

| 45° | ~0.7 | ~0.3 | Similar |

| 90° | 0 | 1 | Not related |

| 180° | −1 | 2 | Opposite meaning |

Why Cosine Similarity is IMPORTANT for Word2Vec

Word2Vec converts words into vectors.

But vectors alone are useless unless we can:

✅ compare words

✅ find closest words

✅ detect similarity

Cosine similarity does exactly this.

It helps to:

- Find similar words

- Do word arithmetic

- Recommend next words

- Power chatbots and search engines

Summary

- Word2Vec → words become vectors

- Cosine similarity → measures angle

- Small angle → similar words

- 90° → unrelated words

That’s how Word2Vec mathematically understands meaning.

How does Word2Vec get trained?

There are two main ways to train a Word2Vec model:

- CBOW (Continuous Bag of Words)

- Skip-Gram